Malus.sh: AI, clean room and a controversial vision for the future of licensing

TL;DR

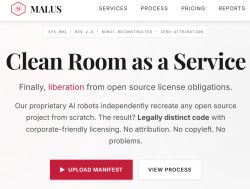

- Malus.sh is a satirical AI clean room recreation platform that claims to rewrite open-source software from documentation without accessing the original code.

- It uses a clean room process split into two stages and teams: one studies public documentation and behavior, and another implements the software from scratch.

- The licensing debate focuses on attribution, sharing changes, the AGPL, and the GNU General Public License, which can require derivative software to stay open.

- The site can actually rewrite a module, but its exaggerated framing is meant to highlight tensions between open source licensing and commercial interests.

The project focuses on licensing issues: the obligation to credit authors, the requirement to make changes available, and the risks of licenses such as the AGPL and the GNU General Public License (GPL). The GPL is sometimes called 'viral' because software that incorporates GPL-licensed code must, when distributed, be released under the same terms (i.e. with its source code open). The proposed solution is clean room design, a technique known for decades, which separates the 'idea' from its implementation in order to create an independent version of the software.

What is clean room? As the name suggests, clean room design deliberately splits software development into two stages handled by two separate teams. The first team analyses only documentation, system behaviour or public descriptions of how the software works, without any access to the source code. The second team, isolated from the first, implements the solution from scratch based solely on those descriptions. The aim is for the resulting code to be legally independent rather than a copy or derivative work of the original.
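
The two-stage process can be pictured as a data flow in which only a specification crosses the barrier between the teams. Below is a minimal, hypothetical Python sketch, assuming a toy "original" left-pad function whose source is hidden and which the analysts may only call as a black box. The names `analyst_team`, `implementer_team`, `Specification` and `passes_spec`, and the left-pad example itself, are illustrative assumptions and do not describe how Malus.sh works.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical "original" library. Neither team reads this source; the
# analysts may only call it as a black box and read PUBLIC_DOCS.
def _original_left_pad(s: str, length: int, ch: str = " ") -> str:
    return s.rjust(length, ch)


PUBLIC_DOCS = (
    "left_pad(s, length, ch=' ') returns s padded on the left with the "
    "single character ch until it is at least `length` characters long."
)


@dataclass(frozen=True)
class Specification:
    """The only artifact allowed to cross the barrier between the teams."""
    description: str
    # Each entry is (arguments, result observed from the black box).
    examples: tuple


def analyst_team(docs: str, black_box: Callable[..., str], probes: list) -> Specification:
    """Stage 1: study public documentation and observable behaviour only."""
    examples = tuple((args, black_box(*args)) for args in probes)
    return Specification(description=docs, examples=examples)


def implementer_team(spec: Specification) -> Callable[..., str]:
    """Stage 2: write new code using nothing but the specification."""
    def left_pad(s: str, length: int, ch: str = " ") -> str:
        # Independent implementation derived from the prose description.
        missing = max(0, length - len(s))
        return ch * missing + s
    return left_pad


def passes_spec(impl: Callable[..., str], spec: Specification) -> bool:
    """Check the new code against the behaviour recorded in stage 1."""
    return all(impl(*args) == expected for args, expected in spec.examples)


spec = analyst_team(PUBLIC_DOCS, _original_left_pad, [("abc", 5), ("abc", 2), ("7", 3, "0")])
new_left_pad = implementer_team(spec)
print(passes_spec(new_left_pad, spec))  # True
```

The key design choice is that `implementer_team` receives only a `Specification`. In a real clean room the separation is organisational and legal, which code like this can only model by convention, not enforce.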

Although the concept rests on a viable legal basis, and the site actually works and can 'rewrite' a specified module, the project is deliberately over the top. The technology community quickly recognised it as satire that caricatures the tensions between the open source world and commercial interests, especially where AI is used to circumvent licensing restrictions.

Is Malus.sh just innocent satire, or will this kind of AI 'rewriting' of code become a real challenge that the open source community will have to face? I invite you to discuss: what is your opinion?

Comments

"Tension" in this case means the impossibility of selling someone else's work that you got for free. This is indeed a big problem for companies :) As an entrepreneur, I wish I only had problems like...

Where the 'clean room' approach used to require a lot of work, there are now claims that all you need is a good LLM agent system along with tests and you can generate your equivalent of a given library...

In the world of AI, copyright no longer exists. Things are moving so fast that they pull in books, documentation and code on the fly without looking at the licences. I don't know where this will lead,...

This reminds me of a related problem: researchers used an LLM to reproduce the content of the book "Harry Potter" 96% faithfully to the original: https://arxiv.org/abs/2601.02671 This kind of problem...

It's only been a few days since this topic was published, and a practical example has already appeared that illustrates the problem I wrote about: The Claude Code leak from Anthropic and copyright...

Interesting times. If they were stubborn, they could try to protect the architecture itself, i.e. the idea. But that's very hard to do, because anyone can create a recreational wo...

As far as I know, the idea itself cannot be patented, only the 'implementation' / approach, and this is based on a case from the 19th century