Many of you are probably wondering how you can unleash the power of today's artificial intelligence and integrate it into your own projects and products. The most common form of access to LLMs, the popular chat window, is just the tip of the iceberg. Here, I will show how you can integrate modern multimodal models into your own system via a programming API. I will present an overview of what such models can do, including processing text, images, and even audio and video.

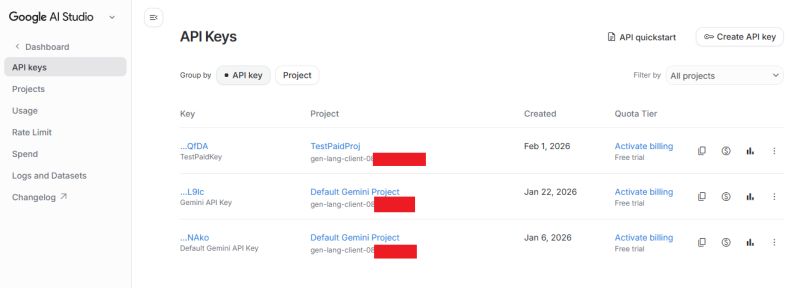

I assume you already have a Google account and are logged in at aistudio.google.com. You also need a payment method hooked up, although you can use the trial version as well: Google gives out trial periods and start-up credits quite generously. You start by creating a project and an API key, which you will find in the API keys section:

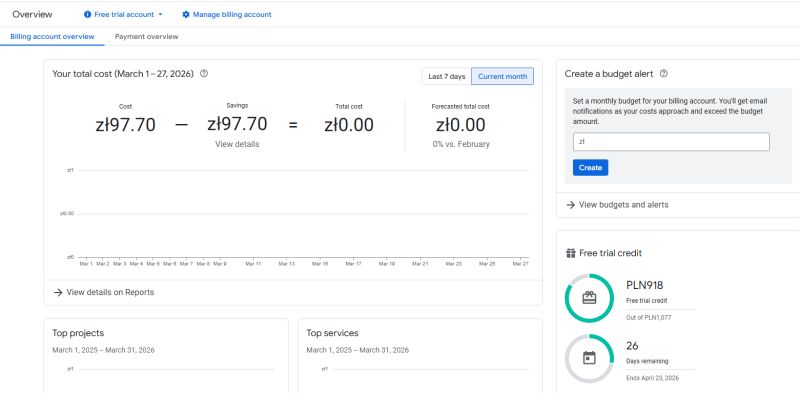

Our costs, in turn, are shown in billing, and we need to keep a close eye on them there. Every model has its own price; I won't repeat this later, but it is easy to use up a lot of tokens.
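To get a feeling for how token usage turns into money, here is a minimal sketch of the arithmetic. It assumes the response carries a usageMetadata object with promptTokenCount and candidatesTokenCount fields; the prices are hypothetical placeholders and must be replaced with the current values from Google's pricing page.

```javascript
// Hypothetical prices in USD per one million tokens (placeholders only;
// check Google's pricing page for the real numbers of your model).
const EXAMPLE_PRICES = {
  inputPerMillion: 0.3,
  outputPerMillion: 2.5,
};

// usage: the usageMetadata object of a response, assumed to look like
// { promptTokenCount, candidatesTokenCount }.
function estimateCostUsd(usage, prices = EXAMPLE_PRICES) {
  const inputTokens = usage.promptTokenCount || 0;
  const outputTokens = usage.candidatesTokenCount || 0;
  return (
    (inputTokens / 1e6) * prices.inputPerMillion +
    (outputTokens / 1e6) * prices.outputPerMillion
  );
}

// Example: a request with 1200 input tokens and 800 output tokens.
const exampleCost = estimateCostUsd({
  promptTokenCount: 1200,
  candidatesTokenCount: 800,
});
console.log(`Estimated cost: $${exampleCost.toFixed(6)}`);
```

A single request is cheap, but the same calculation multiplied by thousands of calls, or by large image and video inputs, is what makes the bill grow.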

For more information I refer you to Google's help pages; here I want to focus on presenting what is possible with today's AI.

Hello World

Here I have decided to use Node.js together with a ready-made generative-ai package from Google. Some might prefer Python, but I prefer syntax in the style of Java and C++. Let's start with package.json:

Code: JSON

Based on these, I will run the following demos. I'm assuming basic knowledge of Node.js: we install the project via npm install. Anyway, LLMs themselves can help with this too.

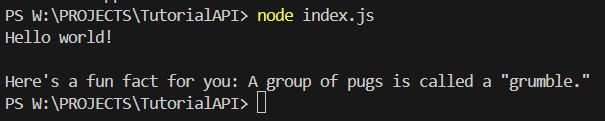

Hello World - text prompt

As "Hello world" I will run the simplest LLM model with a simple prompt. In the library used, it boils down to specifying the API key, selecting the model and sending the prompt:
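As a rough sketch of those three steps (not the linked file itself), the code below assumes the @google/generative-ai package is installed and that the API key sits in an environment variable called GEMINI_API_KEY. The variable name, the prompt and the chosen model are assumptions for illustration.

```javascript
// Sketch only: assumes `npm install @google/generative-ai` and an API key
// in the GEMINI_API_KEY environment variable (the name is an assumption).
const MODEL_NAME = "gemini-2.5-flash"; // example model; any text model should work

async function helloWorld(prompt) {
  // Loaded lazily so this file can be parsed without the package installed.
  const { GoogleGenerativeAI } = await import("@google/generative-ai");
  const genAI = new GoogleGenerativeAI(process.env.GEMINI_API_KEY); // 1. API key
  const model = genAI.getGenerativeModel({ model: MODEL_NAME }); // 2. model
  const result = await model.generateContent(prompt); // 3. prompt
  return result.response.text();
}

// Only call the API when a key is actually configured.
if (process.env.GEMINI_API_KEY) {
  helloWorld("Say hello to the world in one sentence.")
    .then((text) => console.log(text))
    .catch((err) => console.error("Request failed:", err.message));
}
```

Keeping the key in an environment variable rather than in the source file makes it harder to publish it by accident.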

https://github.com/openshwprojects/GoogleAIDemos/blob/main/helloWorld.js

The result:

Hello World - listing models

The second basic thing is to check the available models via the API. This saves us from guessing blindly which ones can be used.
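One way to do this without any SDK is a plain HTTP request. The sketch below assumes the public REST endpoint of the Generative Language API, Node.js 18 or newer (for the built-in fetch) and an API key in GEMINI_API_KEY; the helper names are my own, and the sample data at the bottom is made up for the demonstration.

```javascript
// Assumption: public REST endpoint of the Generative Language API.
const MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models";

// Keep only models that support the given method (e.g. "generateContent")
// and format them as "name | displayName".
function formatModels(models, method) {
  return models
    .filter((m) => (m.supportedGenerationMethods || []).includes(method))
    .map((m) => `${m.name} | ${m.displayName}`);
}

// Fetch one page of models; pagination beyond the first page is ignored here.
async function listModels(apiKey) {
  const url = `${MODELS_URL}?key=${encodeURIComponent(apiKey)}&pageSize=1000`;
  const res = await fetch(url);
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  const body = await res.json();
  return body.models || [];
}

// Offline demonstration with made-up sample data in the API's shape.
const sampleModels = [
  {
    name: "models/gemini-2.5-flash",
    displayName: "Gemini 2.5 Flash",
    supportedGenerationMethods: ["generateContent", "countTokens"],
  },
  {
    name: "models/gemini-embedding-001",
    displayName: "Gemini Embedding 001",
    supportedGenerationMethods: ["embedContent"],
  },
];
console.log(formatModels(sampleModels, "generateContent"));

// Live call, only when a key is configured.
if (process.env.GEMINI_API_KEY) {
  listModels(process.env.GEMINI_API_KEY)
    .then((models) => formatModels(models, "generateContent").forEach((l) => console.log(l)))
    .catch((err) => console.error("Listing failed:", err.message));
}
```

Filtering by supported method is useful because the list mixes chat, embedding, image and video models that are not interchangeable.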

https://github.com/openshwprojects/GoogleAIDemos/blob/main/listModels.js

Result:

- models/gemini-2.5-flash | Gemini 2.5 Flash

- models/gemini-2.5-pro | Gemini 2.5 Pro

- models/gemini-2.0-flash | Gemini 2.0 Flash

- models/gemini-2.0-flash-001 | Gemini 2.0 Flash 001

- models/gemini-2.0-flash-lite-001 | Gemini 2.0 Flash-Lite 001

- models/gemini-2.0-flash-lite | Gemini 2.0 Flash-Lite

- models/gemini-2.5-flash-preview-tts | Gemini 2.5 Flash Preview TTS

- models/gemini-2.5-pro-preview-tts | Gemini 2.5 Pro Preview TTS

- models/gemma-3-1b-it | Gemma 3 1B

- models/gemma-3-4b-it | Gemma 3 4B

- models/gemma-3-12b-it | Gemma 3 12B

- models/gemma-3-27b-it | Gemma 3 27B

- models/gemma-3n-e4b-it | Gemma 3n E4B

- models/gemma-3n-e2b-it | Gemma 3n E2B

- models/gemini-flash-latest | Gemini Flash Latest

- models/gemini-flash-lite-latest | Gemini Flash-Lite Latest

- models/gemini-pro-latest | Gemini Pro Latest

- models/gemini-2.5-flash-lite | Gemini 2.5 Flash-Lite

- models/gemini-2.5-flash-image | Nano Banana

- models/gemini-2.5-flash-lite-preview-09-2025 | Gemini 2.5 Flash-Lite Preview Sep 2025

- models/gemini-3-pro-preview | Gemini 3 Pro Preview

- models/gemini-3-flash-preview | Gemini 3 Flash Preview

- models/gemini-3.1-pro-preview | Gemini 3.1 Pro Preview

- models/gemini-3.1-pro-preview-customtools | Gemini 3.1 Pro Preview Custom Tools

- models/gemini-3.1-flash-lite-preview | Gemini 3.1 Flash Lite Preview

- models/gemini-3-pro-image-preview | Nano Banana Pro

- models/nano-banana-pro-preview | Nano Banana Pro

- models/gemini-3.1-flash-image-preview | Nano Banana 2

- models/gemini-robotics-er-1.5-preview | Gemini Robotics-ER 1.5 Preview

- models/gemini-2.5-computer-use-preview-10-2025 | Gemini 2.5 Computer Use Preview 10-2025

- models/deep-research-pro-preview-12-2025 | Deep Research Pro Preview (Dec-12-2025)

- models/gemini-embedding-001 | Gemini Embedding 001

- models/gemini-embedding-2-preview | Gemini Embedding 2 Preview

- models/aqa | Model that performs Attributed Question Answering.

- models/imagen-4.0-generate-001 | Imagen 4

- models/imagen-4.0-ultra-generate-001 | Imagen 4 Ultra

- models/imagen-4.0-fast-generate-001 | Imagen 4 Fast

- models/veo-2.0-generate-001 | Veo 2

- models/veo-3.0-generate-001 | Veo 3

- models/veo-3.0-fast-generate-001 | Veo 3 fast

- models/veo-3.1-generate-preview | Veo 3.1

- models/veo-3.1-fast-generate-preview | Veo 3.1 fast

- models/gemini-2.5-flash-native-audio-latest | Gemini 2.5 Flash Native Audio Latest

- models/gemini-2.5-flash-native-audio-preview-09-2025 | Gemini 2.5 Flash Native Audio Preview 09-2025

- models/gemini-2.5-flash-native-audio-preview-12-2025 | Gemini 2.5 Flash Native Audio Preview 12-2025

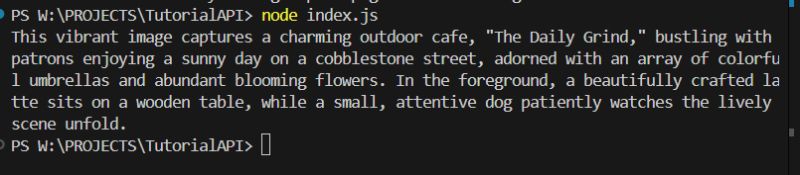

Image description (prompt + image)

Today's models, however, are multimodal and can also describe images. Images are attached to the request encoded in Base64. I have prepared an example image for testing:

The code gains a few extra lines to process the image. The prompt itself is still up to us:
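The extra lines mostly come down to wrapping the image bytes into a request part. A minimal sketch, assuming the inlineData part format of the @google/generative-ai package; the helper name and the file name in the comment are hypothetical.

```javascript
// Build the image part of a multimodal request from raw bytes.
function toInlineImagePart(imageBytes, mimeType) {
  return {
    inlineData: {
      data: Buffer.from(imageBytes).toString("base64"),
      mimeType,
    },
  };
}

// Usage sketch with the SDK (not executed here, file name is hypothetical):
//   const bytes = require("fs").readFileSync("photo.jpg");
//   const result = await model.generateContent([
//     "Describe this image.",
//     toInlineImagePart(bytes, "image/jpeg"),
//   ]);
//   console.log(result.response.text());

// Offline check of the encoding with three dummy bytes.
const demoPart = toInlineImagePart(Buffer.from("abc"), "image/jpeg");
console.log(demoPart.inlineData.data); // prints "YWJj"
```

The prompt and the image travel in one array, so changing the question does not require touching the image handling at all.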

https://github.com/openshwprojects/GoogleAIDemos/blob/main/describeImage.js

Result:

The prompt can be changed as desired. For example, for the command:

List living beings visible on photo

We receive a response that respects what we asked for:

Based on the photo, here are the living beings visible:

1. **People** (numerous individuals seated at tables and walking in the background)

2. **Dog** (sitting in the foreground on the right)

3. **Plants/Flowers** (many potted plants with colorful flowers lining the street and decorating the cafe exterior)

Generating new images

Google's AI can also create images, but only selected models can do this; regular Flash will not create an image for us. One example is the famous Nano Banana in its various versions, which is internally called gemini-2.5-flash-image. In addition to the choice of model, there is the same option as on Google's website, namely the choice of response mode: image only, or text and image.
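The response mode and the handling of the returned image can be sketched as follows. This assumes the setting is called responseModalities (as in the REST API) and that images come back as Base64 inlineData parts; the helper name and the fake response are made up for the demonstration.

```javascript
// Request settings: ask for both text and image output.
// Assumption: the field is called responseModalities, as in the REST API.
const imageGenerationConfig = { responseModalities: ["TEXT", "IMAGE"] };

// Collect every inline image of a response as { mimeType, bytes }.
function extractImages(response) {
  const images = [];
  for (const candidate of response.candidates || []) {
    const parts = (candidate.content && candidate.content.parts) || [];
    for (const part of parts) {
      if (part.inlineData) {
        images.push({
          mimeType: part.inlineData.mimeType,
          bytes: Buffer.from(part.inlineData.data, "base64"),
        });
      }
    }
  }
  return images;
}

// Offline demonstration with a fake response in the assumed shape.
const fakeImageResponse = {
  candidates: [
    {
      content: {
        parts: [
          { text: "Here is your picture." },
          {
            inlineData: {
              mimeType: "image/png",
              data: Buffer.from("png-bytes").toString("base64"),
            },
          },
        ],
      },
    },
  ],
};
const foundImages = extractImages(fakeImageResponse);
console.log(foundImages.length, foundImages[0].mimeType);
// With a real response the bytes could be saved with fs.writeFileSync().
```

Looping over all parts instead of taking the first one matters in text-and-image mode, where the picture is usually not the first part.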

https://github.com/openshwprojects/GoogleAIDemos/blob/main/createImage.js

The created image:

Fits rather well with my description from the prompt, doesn't it?

Generating videos

Videos can be created in a similar way using the Veo interface. I wasn't able to get it to work in the same library as before, so for this example I used the related genai package:

Code: JSON

Generating the video takes a little while, so the API first returns a link for checking the status of the job. Only once the job has finished can the video be saved.
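The waiting part is a classic polling loop. Below is a generic sketch that works with any status function; the calls shown in the comment (generateVideos and getVideosOperation from the @google/genai package) are how I understand that library and should be treated as an assumption, as should the interval and attempt limits.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Poll a long-running operation until it reports done: true.
// fetchStatus is any async function returning the latest operation object.
async function pollUntilDone(fetchStatus, { intervalMs = 10000, maxAttempts = 60 } = {}) {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const operation = await fetchStatus();
    if (operation.done) {
      if (operation.error) {
        throw new Error(`Generation failed: ${operation.error.message}`);
      }
      return operation;
    }
    await sleep(intervalMs);
  }
  throw new Error("Timed out waiting for the operation");
}

// Usage sketch with @google/genai (assumed API, not executed here):
//   let operation = await ai.models.generateVideos({ model, prompt });
//   operation = await pollUntilDone(() =>
//     ai.operations.getVideosOperation({ operation })
//   );

// Offline demonstration: a fake job that finishes on the third check.
let fakeChecks = 0;
pollUntilDone(async () => ({ done: ++fakeChecks >= 3 }), { intervalMs: 5 })
  .then(() => console.log(`Finished after ${fakeChecks} checks`));
```

The attempt limit is there so that a stuck job does not keep the script (and possibly the billing) running forever.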

https://github.com/openshwprojects/GoogleAIDemos/blob/main/createVideo.js

Result:

Converting text to speech

Google also offers conversion of written text into natural-sounding speech. Several different voices are available; here I will use the Kore voice. One potential problem is that the returned data has no WAV header, but it can easily be added.
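Adding the header means prepending 44 bytes that describe the raw samples. The sketch below assumes the API returns 16-bit mono PCM at 24 kHz; if your response reports a different format, adjust the parameters. The function name is my own.

```javascript
// Wrap raw PCM samples in a minimal 44-byte WAV (RIFF) header.
// Assumed defaults: 24 kHz, mono, 16 bits per sample.
function pcmToWav(pcm, { sampleRate = 24000, channels = 1, bitsPerSample = 16 } = {}) {
  const blockAlign = channels * (bitsPerSample / 8); // bytes per sample frame
  const header = Buffer.alloc(44);
  header.write("RIFF", 0, "ascii");
  header.writeUInt32LE(36 + pcm.length, 4); // file size minus 8 bytes
  header.write("WAVE", 8, "ascii");
  header.write("fmt ", 12, "ascii");
  header.writeUInt32LE(16, 16); // size of the fmt chunk
  header.writeUInt16LE(1, 20); // audio format 1 = uncompressed PCM
  header.writeUInt16LE(channels, 22);
  header.writeUInt32LE(sampleRate, 24);
  header.writeUInt32LE(sampleRate * blockAlign, 28); // bytes per second
  header.writeUInt16LE(blockAlign, 32);
  header.writeUInt16LE(bitsPerSample, 34);
  header.write("data", 36, "ascii");
  header.writeUInt32LE(pcm.length, 40); // size of the sample data
  return Buffer.concat([header, pcm]);
}

// Offline demonstration: one second of silence.
const silence = Buffer.alloc(24000 * 2);
const wavFile = pcmToWav(silence);
console.log(wavFile.length); // 44 header bytes + 48000 sample bytes = 48044

// With a real response, the Base64 audio would first be decoded:
//   const pcm = Buffer.from(base64Audio, "base64");
//   require("fs").writeFileSync("speech.wav", pcmToWav(pcm));
```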

https://github.com/openshwprojects/GoogleAIDemos/blob/main/createSpeech.js

The result:

https://github.com/openshwprojects/GoogleAIDemos/blob/main/speech.wav

Photo editing

Nano Banana Pro has also become famous for its sensational photo editing capabilities: you can change objects and people, and even add entirely new things. These functions are available via the API too. The photo, as before, is sent in Base64 format, and the response comes back in the same format.

https://github.com/openshwprojects/GoogleAIDemos/blob/main/editImage.js

Input photo:

Prompt:

Edit this image: replace the dog with a cat. Keep everything else exactly the same.

Result:

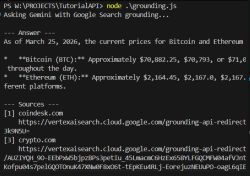

Grounding, or source-finding

Today's AI models can still hallucinate, but fortunately various tools, such as a search engine, can be made available to them so that they give more valid and reliable answers. Instead of relying solely on knowledge from training, the model can refer to data we have prepared in advance or to data found on the internet. This reduces the risk of errors and so-called hallucinations. The search engine is plugged in via the "tools" object:
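A rough sketch of what this can look like, together with reading the sources back from the answer. It assumes the search tool is enabled with a googleSearch entry and that a grounded response exposes groundingMetadata.groundingChunks with web.title and web.uri; these names reflect my understanding of the API and may differ between models and library versions. The sample response is made up.

```javascript
// Assumption: Google Search is enabled with a googleSearch entry in tools,
// passed next to the model name when the model object is created.
const groundingTools = [{ googleSearch: {} }];

// Pull the cited web sources out of a grounded response.
function extractSources(response) {
  const candidate = (response.candidates || [])[0] || {};
  const chunks = (candidate.groundingMetadata || {}).groundingChunks || [];
  return chunks
    .filter((chunk) => chunk.web)
    .map((chunk) => ({ title: chunk.web.title, uri: chunk.web.uri }));
}

// Offline demonstration with a made-up response in the assumed shape.
const fakeGroundedResponse = {
  candidates: [
    {
      groundingMetadata: {
        groundingChunks: [
          { web: { title: "Example source", uri: "https://example.com/article" } },
          { web: { title: "Another source", uri: "https://example.org/page" } },
        ],
      },
    },
  ],
};
for (const source of extractSources(fakeGroundedResponse)) {
  console.log(`${source.title}: ${source.uri}`);
}
```

Printing the sources next to the answer lets the reader verify a claim instead of trusting the model blindly.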

Code: Javascript

Result (abbreviated):

Description of the video

Another valuable application of AI could be to describe short film clips. I have generated an example video clip to try:

I added it to the AI query via the upload function. Full code:
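Unlike a small image, the video is uploaded first and then referenced by its URI once processing has finished. The sketch below covers only that last step and assumes the file metadata has state, uri and mimeType fields with the states PROCESSING, ACTIVE and FAILED; the upload calls in the comment (GoogleAIFileManager from @google/generative-ai/server) are likewise an assumption, and the file names are hypothetical.

```javascript
// Turn an uploaded file's metadata into request parts for the model.
// Assumed fields of file: state, uri, mimeType.
function buildVideoRequest(file, prompt) {
  if (file.state === "PROCESSING") {
    throw new Error("Video is still being processed");
  }
  if (file.state === "FAILED") {
    throw new Error("Video processing failed");
  }
  return [
    { fileData: { fileUri: file.uri, mimeType: file.mimeType } },
    { text: prompt },
  ];
}

// Usage sketch (assumed API, not executed here):
//   const { GoogleAIFileManager } = require("@google/generative-ai/server");
//   const fileManager = new GoogleAIFileManager(process.env.GEMINI_API_KEY);
//   let { file } = await fileManager.uploadFile("clip.mp4", { mimeType: "video/mp4" });
//   while (file.state === "PROCESSING") {
//     await new Promise((r) => setTimeout(r, 5000));
//     file = await fileManager.getFile(file.name);
//   }
//   const result = await model.generateContent(buildVideoRequest(file, "Describe this video."));

// Offline demonstration with made-up metadata.
const fakeFile = {
  state: "ACTIVE",
  uri: "https://example.com/files/demo",
  mimeType: "video/mp4",
};
console.log(JSON.stringify(buildVideoRequest(fakeFile, "Describe this video.")));
```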

https://github.com/openshwprojects/GoogleAIDemos/blob/main/describe_video.js

Video description from AI:

Uploading video to Gemini...

Uploaded file as: files/bd2tskdurfs3

Waiting for video to be processed...

.

Video processed! Asking Gemini to describe it...

--- Gemini's Description ---

The video opens with a stunning low-angle shot, focusing on an **orange tabby cat** walking slowly and deliberately directly towards the viewer down a narrow, ancient-looking cobblestone street.

**The Cat:**

* It is a medium-haired ginger cat, with distinct tabby stripes and markings across its body.

* Its fur is beautifully backlit by the strong, golden light, giving it a radiant halo, especially visible around its fluffy tail, which is held high and slightly curled.

* The cat has bright, observant greenish-yellow eyes and prominent whiskers.

* It walks with a calm, steady gait, its head held level, occasionally glancing subtly to its left or right but primarily looking straight ahead.

**The Environment:**

* The street is paved with irregular, dark cobblestones, with hints of green moss or grass growing between them, suggesting age.

* Old stone or brick buildings, somewhat blurred due to the shallow depth of field, line both sides of the street, receding into the background. These buildings are largely in shadow, further emphasizing the bright light down the center of the street.

* The overall setting gives the impression of a quaint European alleyway or historic town street.

**The Lighting:**

* The scene is bathed in a warm, intense golden light, strongly indicating either sunrise or sunset (golden hour).

* The light source is directly behind the approaching cat, creating a dramatic backlighting effect that makes the cat's silhouette pop and its fur glow.

* The cobblestones directly in front of the camera are also illuminated by this warm glow, highlighting their texture.

**Camera Movement:**

* The camera maintains a very low perspective, almost at ground level, effectively tracking the cat's movement.

* It performs a smooth, continuous forward tracking shot, keeping the cat centered in the frame as it steadily approaches.

* The shot maintains a consistent shallow depth of field, keeping the cat sharp while the background is softly blurred.

**Action:**

* The cat continues its measured walk towards the camera, gradually filling more of the frame.

* At approximately 0:00:07, the cat pauses briefly, looking directly at the camera, then slightly turns its head to its left (viewer's right) before the video concludes.

The entire scene is serene and picturesque, enhanced by the gentle background music (a soft piano melody).

I repeated the whole thing with an electronics video:

Description:

--- Gemini's Description ---

In this close-up video, a person is shown using soldering tools to remove a small component from a green circuit board. The circuit board is covered in numerous tiny golden squares and green lines, indicating complex circuitry. A dark, liquid substance, likely flux, is spread around the component being worked on.

At 0:03, a soldering gun is introduced into the frame from the top right, aiming at the component. The person uses the soldering gun to apply heat to the component.

At 0:07, a pair of tweezers is used to gently tap and move the component, ensuring the solder melts evenly.

At 0:13, the tweezers are momentarily removed, and the soldering gun continues to heat the component.

From 0:19 to 0:21, the soldering gun is moved around the component, ensuring all sides are heated.

At 0:28, the soldering gun is briefly moved away, revealing the component still in place.

At 0:38, the soldering gun is back in position, and a pair of tweezers re-enters the frame, now positioned to the left of the component.

From 0:42 to 0:45, the tweezers are used to nudge the component from its left side, testing the fluidity of the solder.

From 0:52 to 1:29, the tweezers are continuously used to gently push and wiggle the component, slowly detaching it as the heat from the soldering gun melts the solder. The component rocks back and forth, indicating it's becoming loose.

At 1:30, the component appears fully detached from one side, and the person continues to heat and manipulate it with the tweezers to fully free it.

At 1:38, the component is successfully removed from the circuit board, leaving behind an empty space and the dark liquid flux. The soldering gun is then removed from the frame.

By the way, these descriptions are quite good...

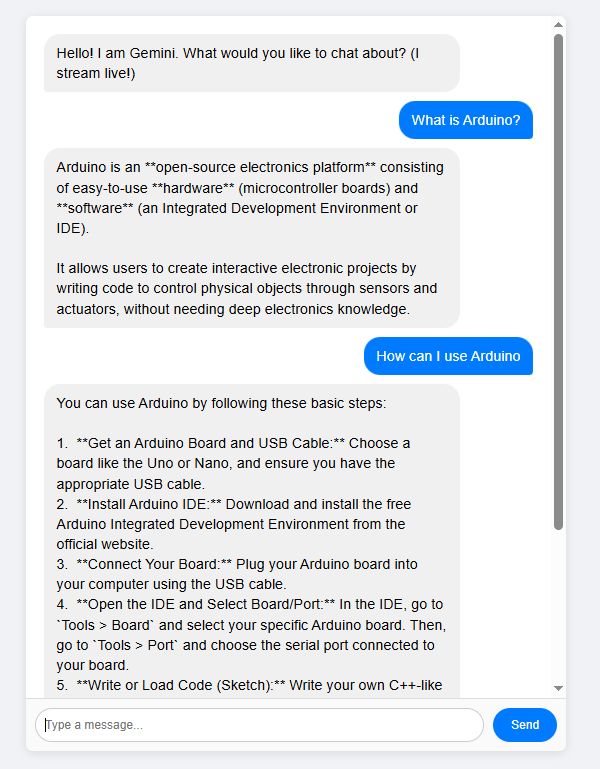

Example chat

Of course, you can also easily build a replacement for the classic chat, the kind that became popular with the arrival of ChatGPT: a simple chat with an assistant. The only thing worth noting here is that a system prompt can be defined when the chat is created; it determines the behaviour of the assistant:
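A minimal console chat along these lines might look as follows. It is a sketch rather than the linked file: it assumes the @google/generative-ai package, Node.js 17 or newer (for readline/promises), an API key in GEMINI_API_KEY, and a system prompt and model name that I made up for illustration.

```javascript
// Example system prompt (made up for this sketch).
const SYSTEM_PROMPT = "You are a concise assistant for electronics hobbyists.";

// Model parameters; the system prompt goes in as systemInstruction.
function buildModelParams(systemPrompt) {
  return { model: "gemini-2.5-flash", systemInstruction: systemPrompt };
}

async function runChat(apiKey) {
  const { GoogleGenerativeAI } = await import("@google/generative-ai");
  const readline = await import("node:readline/promises");
  const model = new GoogleGenerativeAI(apiKey).getGenerativeModel(
    buildModelParams(SYSTEM_PROMPT)
  );
  const chat = model.startChat({ history: [] }); // the SDK keeps the history
  const rl = readline.createInterface({ input: process.stdin, output: process.stdout });
  for (;;) {
    const line = await rl.question("> ");
    if (line.trim() === "exit") break;
    const result = await chat.sendMessage(line);
    console.log(result.response.text());
  }
  rl.close();
}

// Start only with a configured key and an interactive terminal.
if (process.env.GEMINI_API_KEY && process.stdin.isTTY) {
  runChat(process.env.GEMINI_API_KEY).catch((err) =>
    console.error("Chat failed:", err.message)
  );
}
```

Because the chat object stores earlier turns, every new message is sent together with the growing history, which also means each turn costs more tokens than the previous one.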

Code: Javascript

https://github.com/openshwprojects/GoogleAIDemos/blob/main/chat.js

The simplest chat does not render Markdown such as bold text or code blocks, but the rest of the behaviour is in line with what we know from official products:

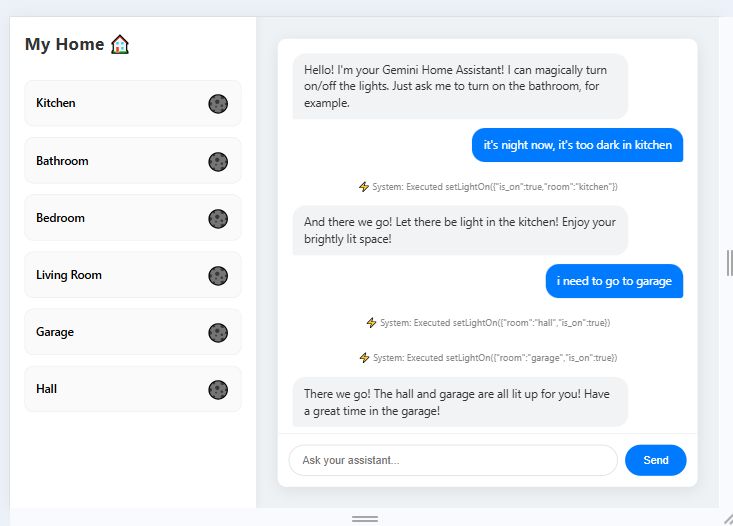

Custom tools for AI

Modern artificial intelligence is also able to use tools effectively. The interface we use has a dedicated solution for this, where we define a set of tools and the model then uses them at will. As an example, I made a simulated controller for the lights in a house:
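To make the idea concrete, here is a sketch of one tool declaration and the local code that executes the model's calls. The tool name setLight, its parameters and the room list are hypothetical and not taken from the linked file; the declaration format (functionDeclarations with a JSON-schema-like parameters object) and the functionCalls() accessor in the comment follow my understanding of the @google/generative-ai package.

```javascript
// Hypothetical tool: name, rooms and schema are for illustration only.
const lightTool = {
  functionDeclarations: [
    {
      name: "setLight",
      description: "Turn the light in a room on or off.",
      parameters: {
        type: "OBJECT",
        properties: {
          room: { type: "STRING", description: "Room name, e.g. kitchen" },
          on: { type: "BOOLEAN", description: "true = on, false = off" },
        },
        required: ["room", "on"],
      },
    },
  ],
};

// Simulated house state instead of real hardware.
const lights = { kitchen: false, corridor: false, garage: false };

// Execute one function call requested by the model: { name, args }.
function handleFunctionCall(call) {
  if (call.name !== "setLight") {
    return { error: `Unknown tool: ${call.name}` };
  }
  const { room, on } = call.args;
  if (!Object.hasOwn(lights, room)) {
    return { error: `Unknown room: ${room}` };
  }
  lights[room] = Boolean(on);
  return { room, on: lights[room] };
}

// Usage sketch (assumed API, not executed here):
//   const model = genAI.getGenerativeModel({ model, tools: [lightTool] });
//   const result = await chat.sendMessage("I am going to the garage.");
//   for (const call of result.response.functionCalls() || []) {
//     const output = handleFunctionCall(call);
//     await chat.sendMessage([{ functionResponse: { name: call.name, response: output } }]);
//   }

// Offline demonstration of the dispatcher.
console.log(handleFunctionCall({ name: "setLight", args: { room: "corridor", on: true } }));
console.log(lights);
```

The model never touches the lights itself; it only asks for a call, and the local dispatcher decides whether and how to carry it out, which is also the right place for validation.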

Code: JSON

I also gave it a corresponding prompt that explains the role of the corridor. Full code:

https://github.com/openshwprojects/GoogleAIDemos/blob/main/homeControl.js

Result:

As you can see, the AI can understand the situational context and, for example, turns on the light in the corridor when I go to the garage, even though I have not written anything about the corridor.

Summary

These are the capabilities of today's AI models that are publicly available via APIs. All of this can be freely integrated into your projects, although you need to be aware of the pricing of the services (tokens), as you can quickly rack up large costs if usage is not limited.

I based the demos in this topic on JavaScript with Node.js, although you can just as well connect from Python or any other language. Similarly, I have relied only on models from Google here, although various alternatives are available, for example from OpenAI or Anthropic.

If there are problems, the AI itself will help anyway: reworking or even reconstructing such simple examples is not difficult for modern models. This topic is more a presentation of what is possible than of how it should be done at the code level.

There is no doubt that today's artificial intelligence systems can process text and images correctly, and as you can see, they can also cope with audio and video.

Do you use artificial intelligence via APIs in your projects, and if so, for what?
